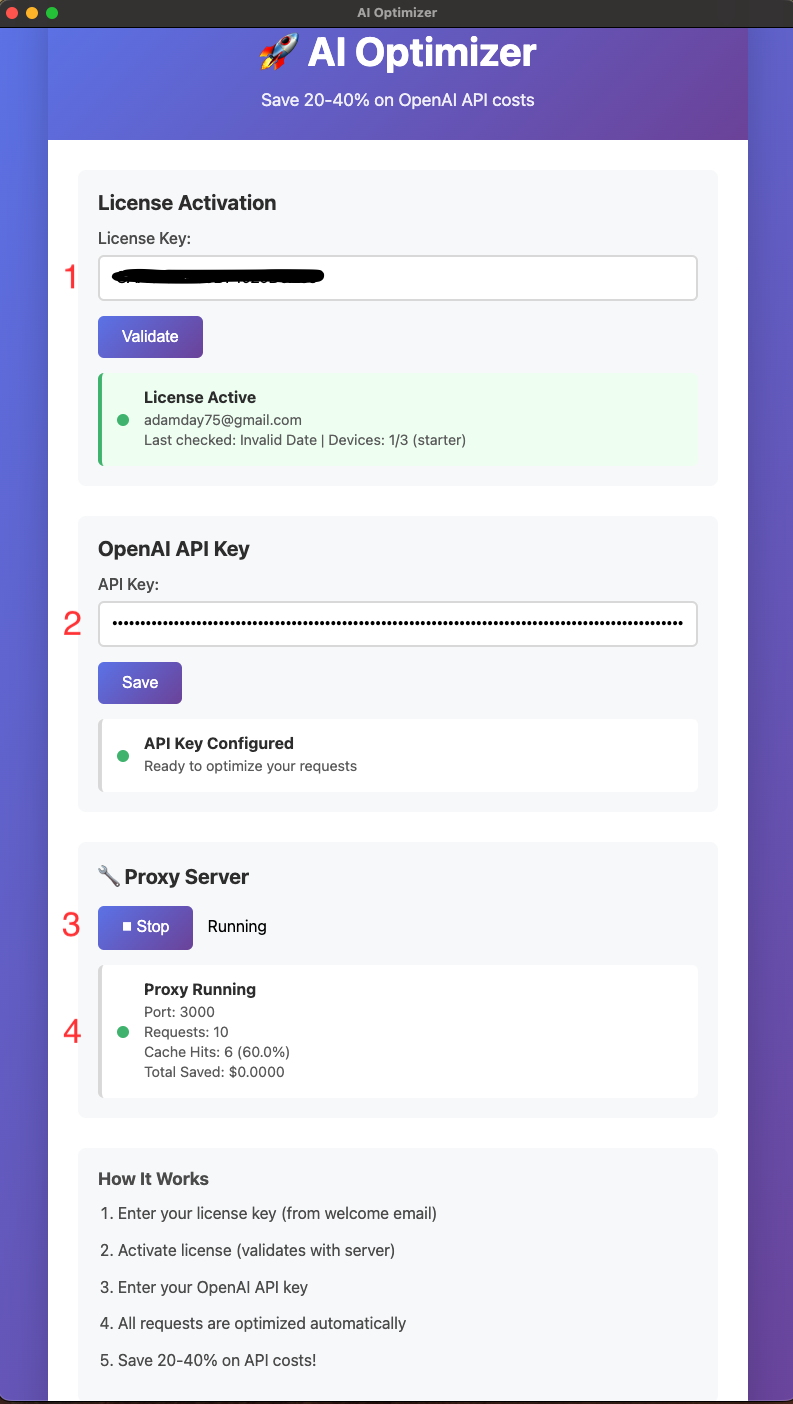

See the setup in one screen

AI Optimizer is simple to get running: enter your license, add your OpenAI API key, start the local proxy, and watch requests, cache hits, and hit rate update as your workflow runs.

1. Enter your license

Use the license from your welcome email to activate the app on your device.

2. Add your OpenAI API key

AI Optimizer needs your API key so it can route requests through the local proxy before they reach OpenAI.

3. Start the proxy

Once the proxy is running, AI Optimizer listens on your machine, so your workflow can send requests to it instead of calling OpenAI directly every time.
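To make "use it instead of calling OpenAI directly" concrete, here is a minimal sketch of what pointing a workflow at a local proxy could look like. It rests on several assumptions that this page does not confirm: that the proxy accepts OpenAI-style paths, that it listens at `http://127.0.0.1:8080/v1` (a made-up address; use whatever the app shows), and that the client still sends a key. `build_chat_request` is a hypothetical helper, and the model name is only a placeholder.

```python
import json
import urllib.request

# Hypothetical local address; replace with the host and port the app displays.
PROXY_BASE_URL = "http://127.0.0.1:8080/v1"


def build_chat_request(base_url: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request aimed at base_url."""
    payload = {
        "model": "gpt-4o-mini",  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Assumption: the proxy may ignore this if it already holds your key.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The only change from a direct call is the base URL.
request = build_chat_request(PROXY_BASE_URL, "sk-your-key", "Hello")
print(request.full_url)
```

The request is built but never sent, so the snippet runs without the proxy or a real key. In practice, most OpenAI client libraries expose a base URL setting that serves the same purpose.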

4. Confirm it’s working

Watch requests, cache hits, and hit rate update in real time so you can quickly verify that traffic is flowing through the optimizer.
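As a rough guide to reading those counters, the sketch below assumes hit rate is simply cache hits divided by total requests. That definition is an assumption, since the app may compute it differently, and `hit_rate` is a hypothetical helper, not part of AI Optimizer.

```python
def hit_rate(requests: int, cache_hits: int) -> float:
    """Return the fraction of requests served from cache (assumed definition)."""
    if requests < 0 or cache_hits < 0 or cache_hits > requests:
        raise ValueError("counts must be non-negative and cache_hits <= requests")
    if requests == 0:
        return 0.0  # no traffic yet, so report 0 rather than dividing by zero
    return cache_hits / requests


# Example: 50 of 200 requests were answered from cache.
rate = hit_rate(requests=200, cache_hits=50)
print(f"{rate:.0%}")
```

If requests climb while cache hits stay at zero, traffic is reaching the proxy but nothing has been repeated yet. If neither counter moves, the workflow is probably still calling OpenAI directly.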